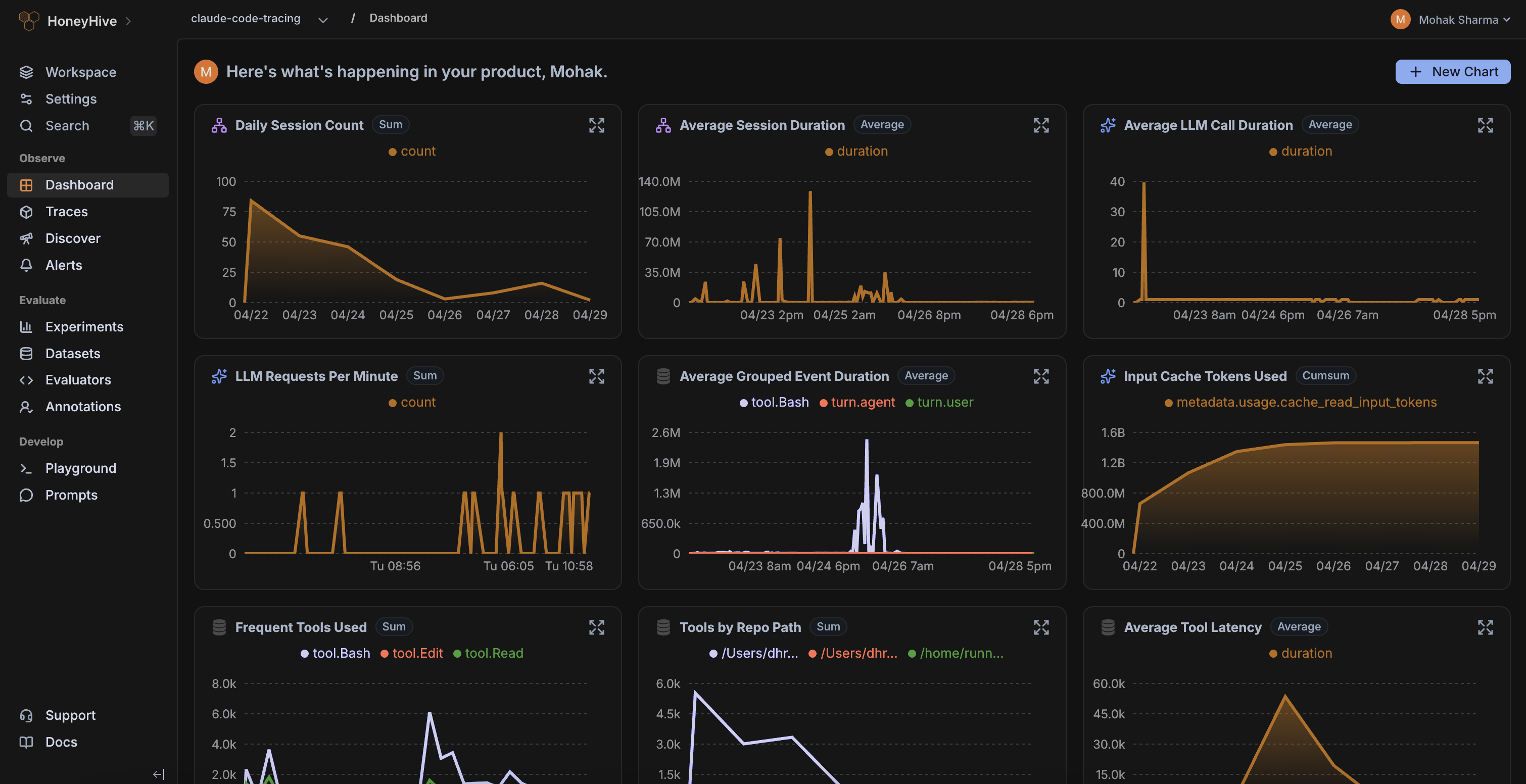

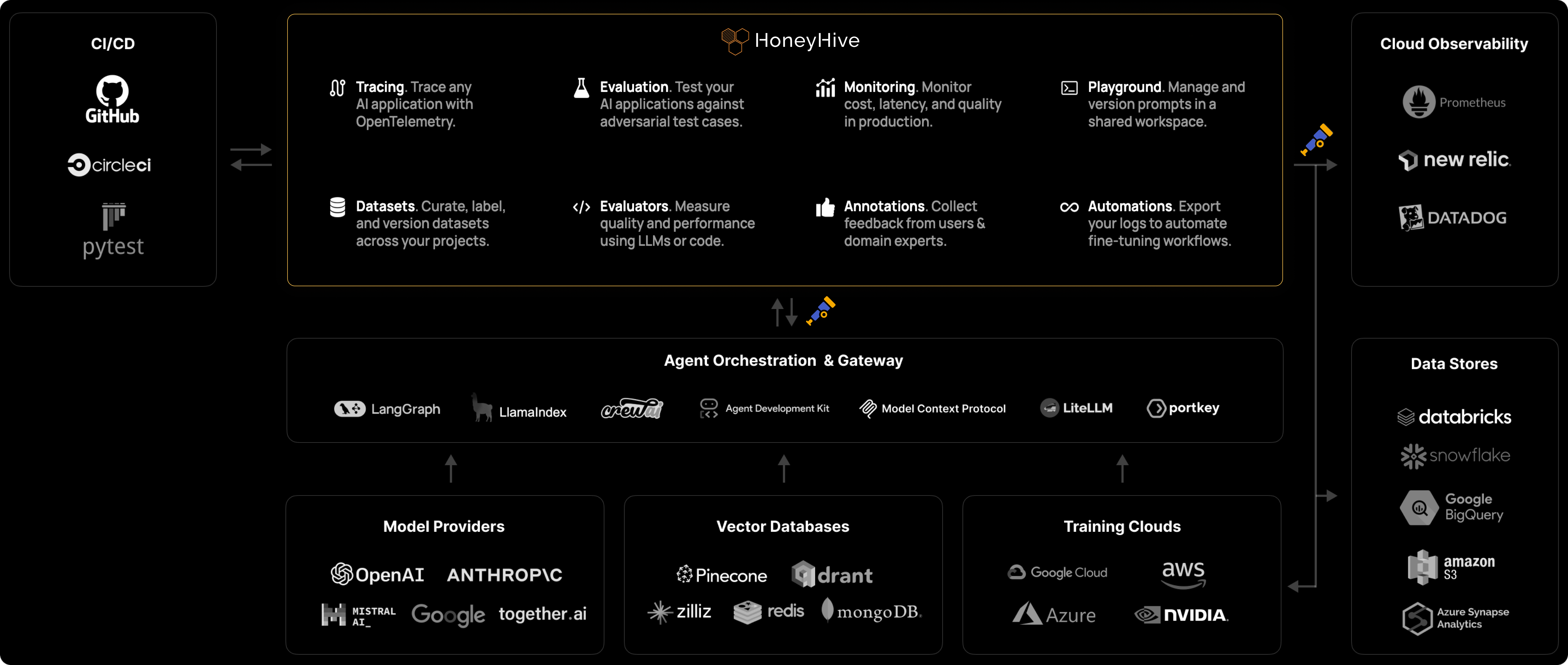

HoneyHive unifies observability and evaluation into a continuous improvement loop, so every team can ship quality agents with confidence.

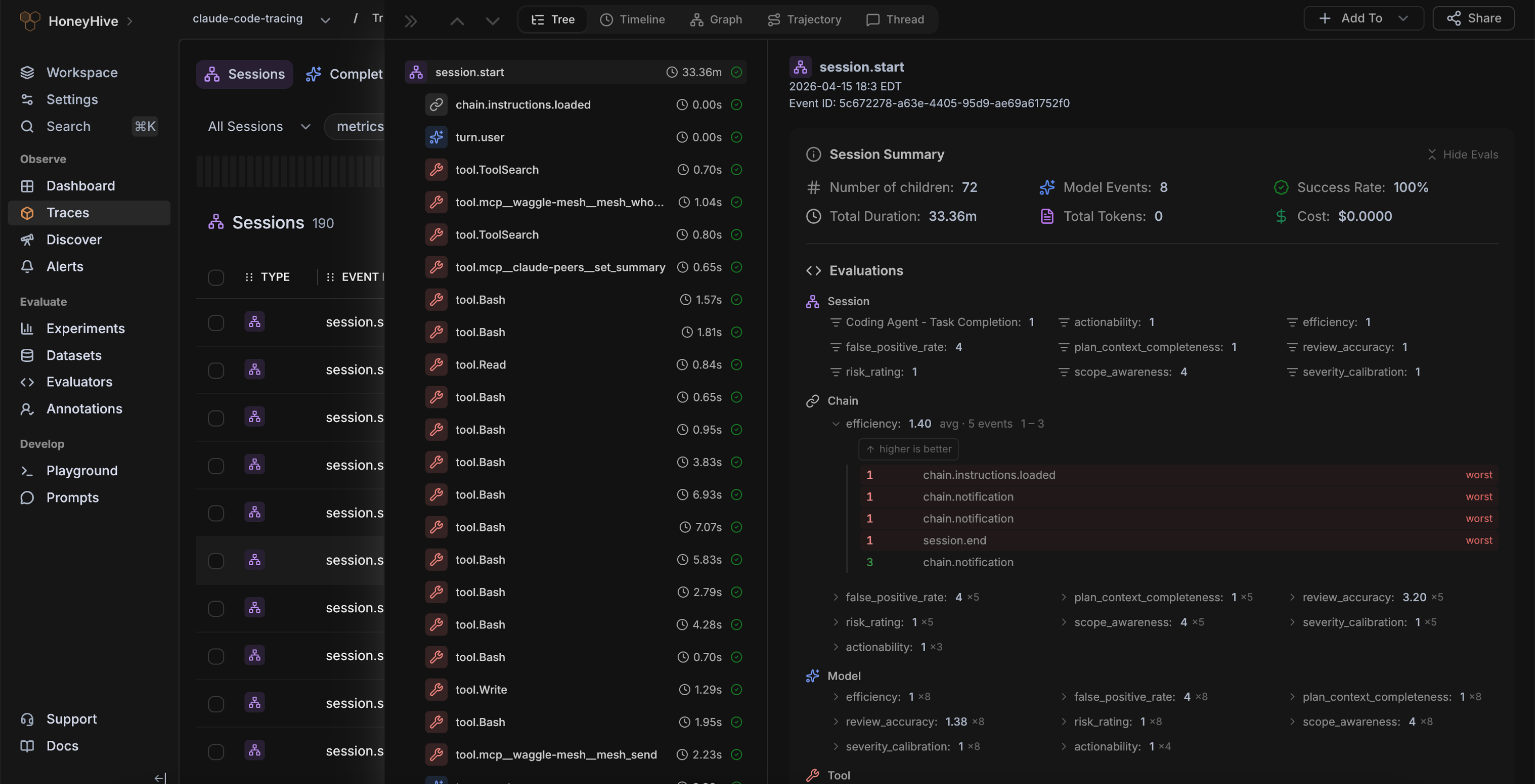

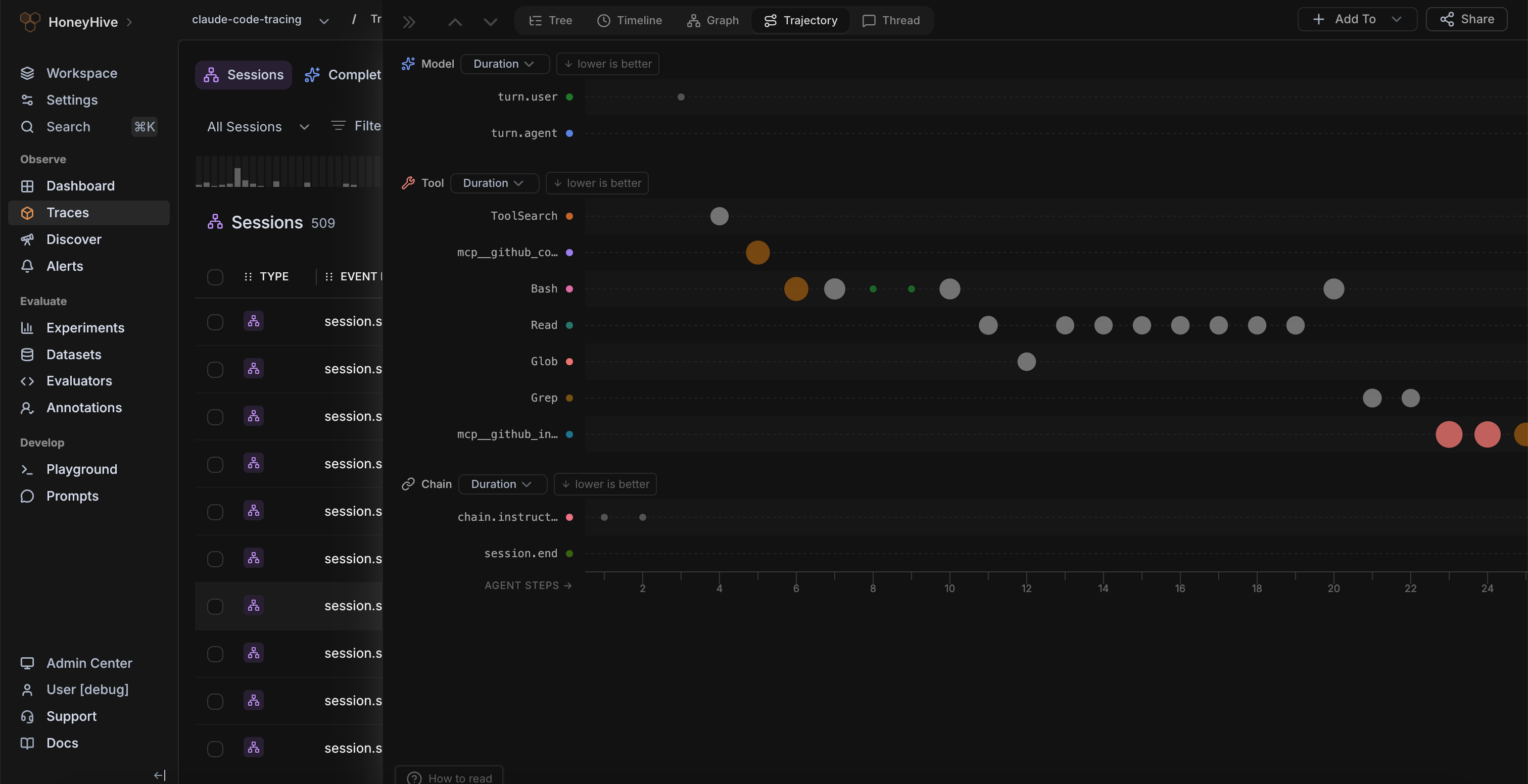

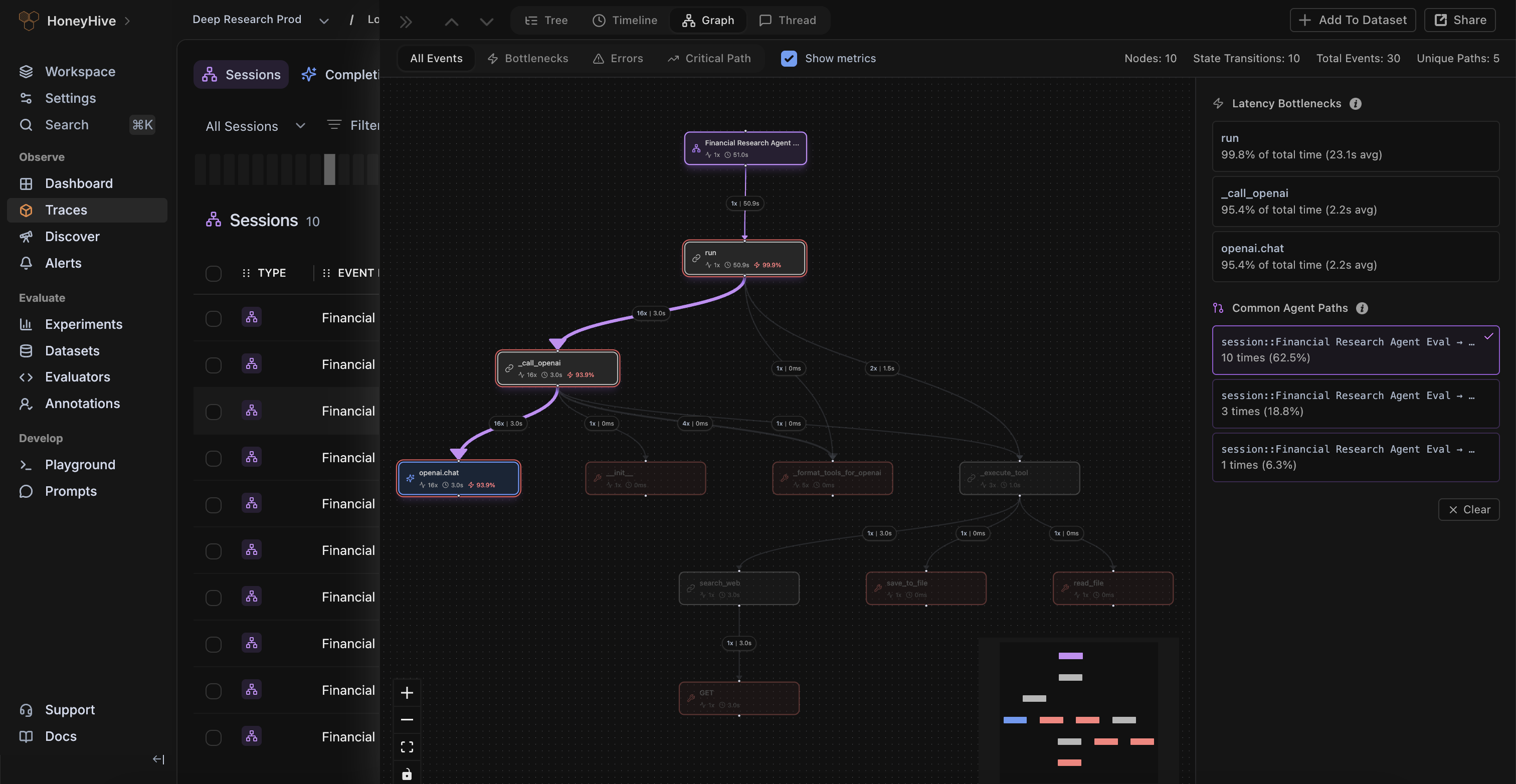

Instrument any agent on any stack with OpenTelemetry — and give every team one shared source of truth to debug agent behavior.

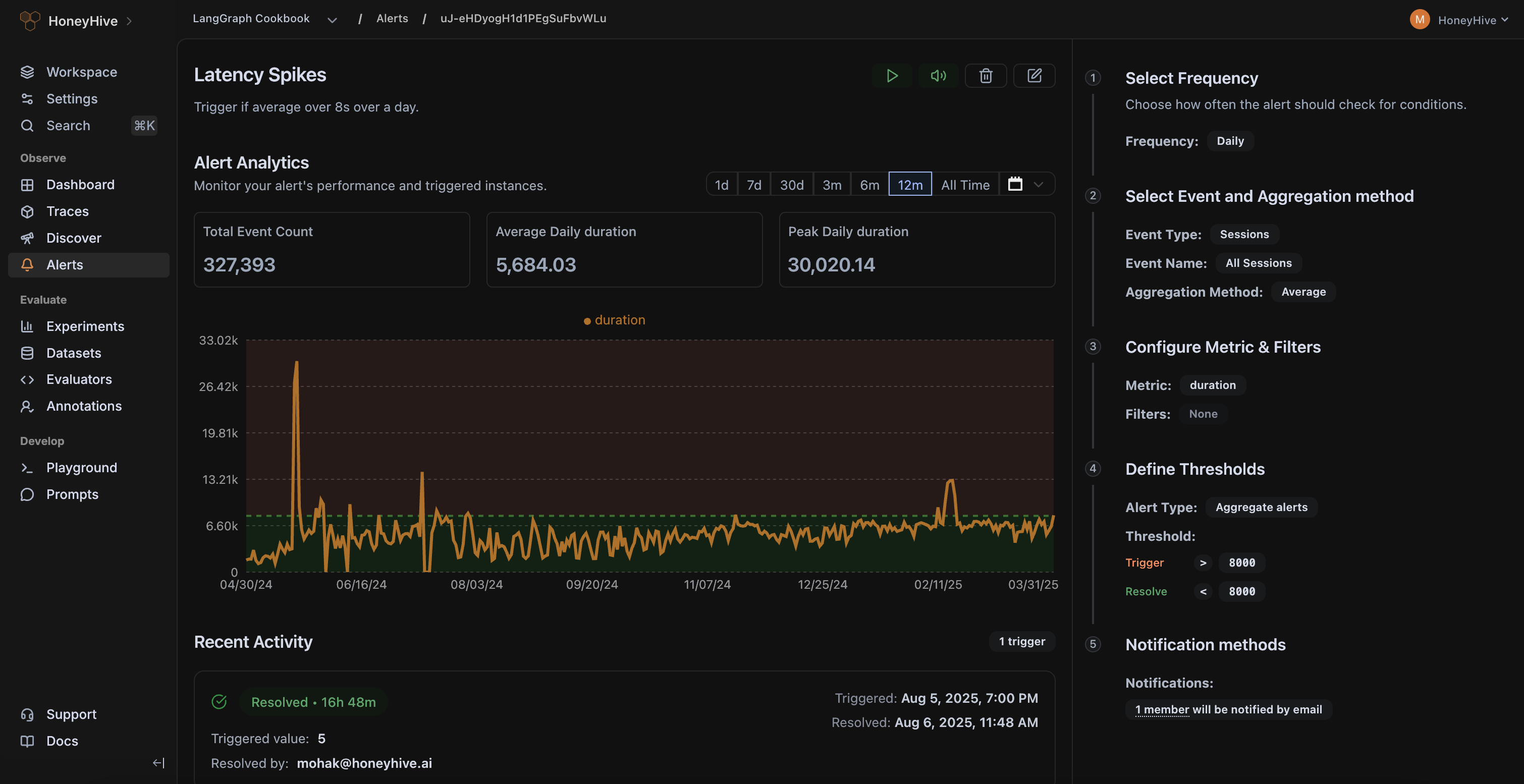

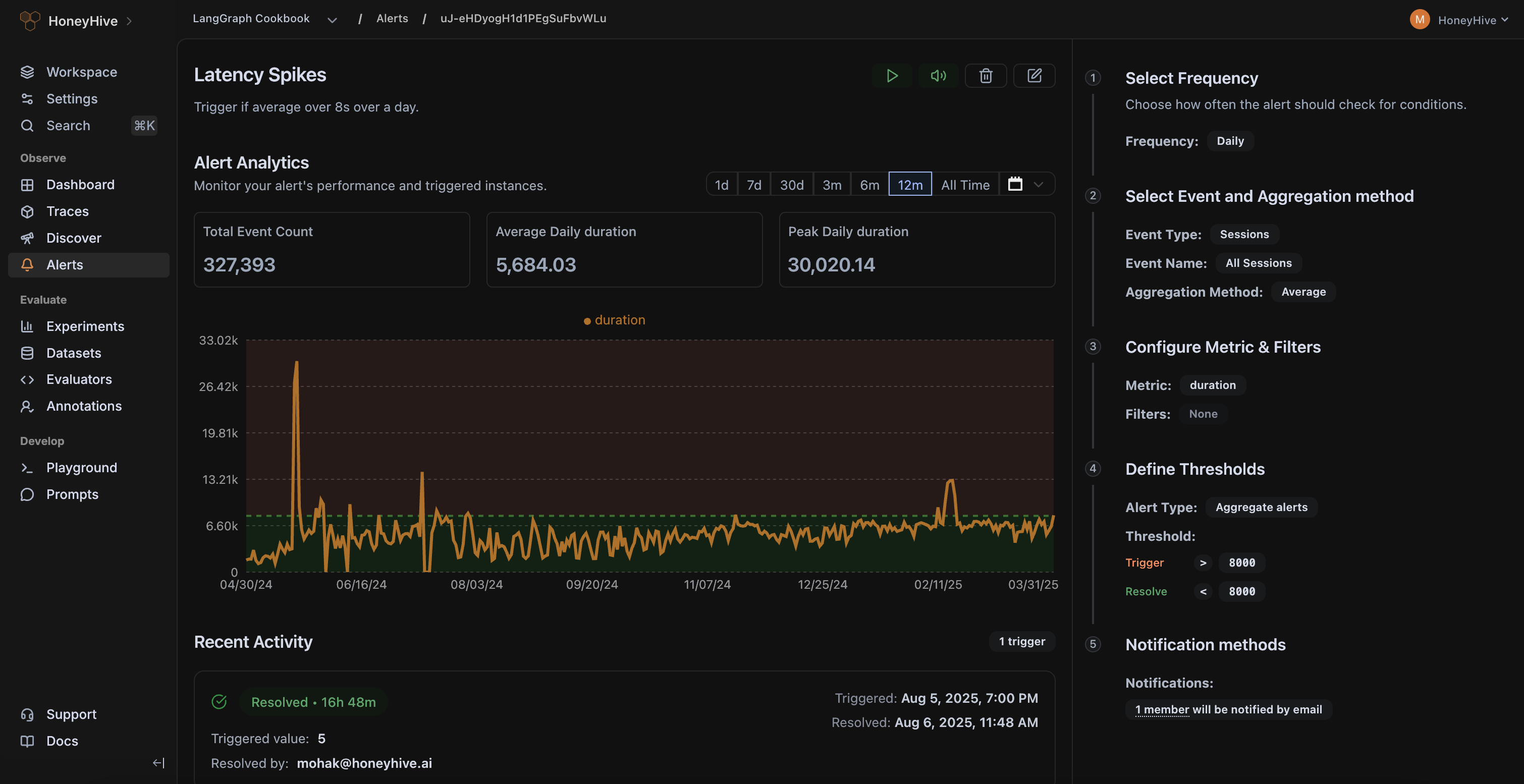

Continuously evaluate live traces, monitor real-world feedback from users, and alert on the failure modes that matter to your business.

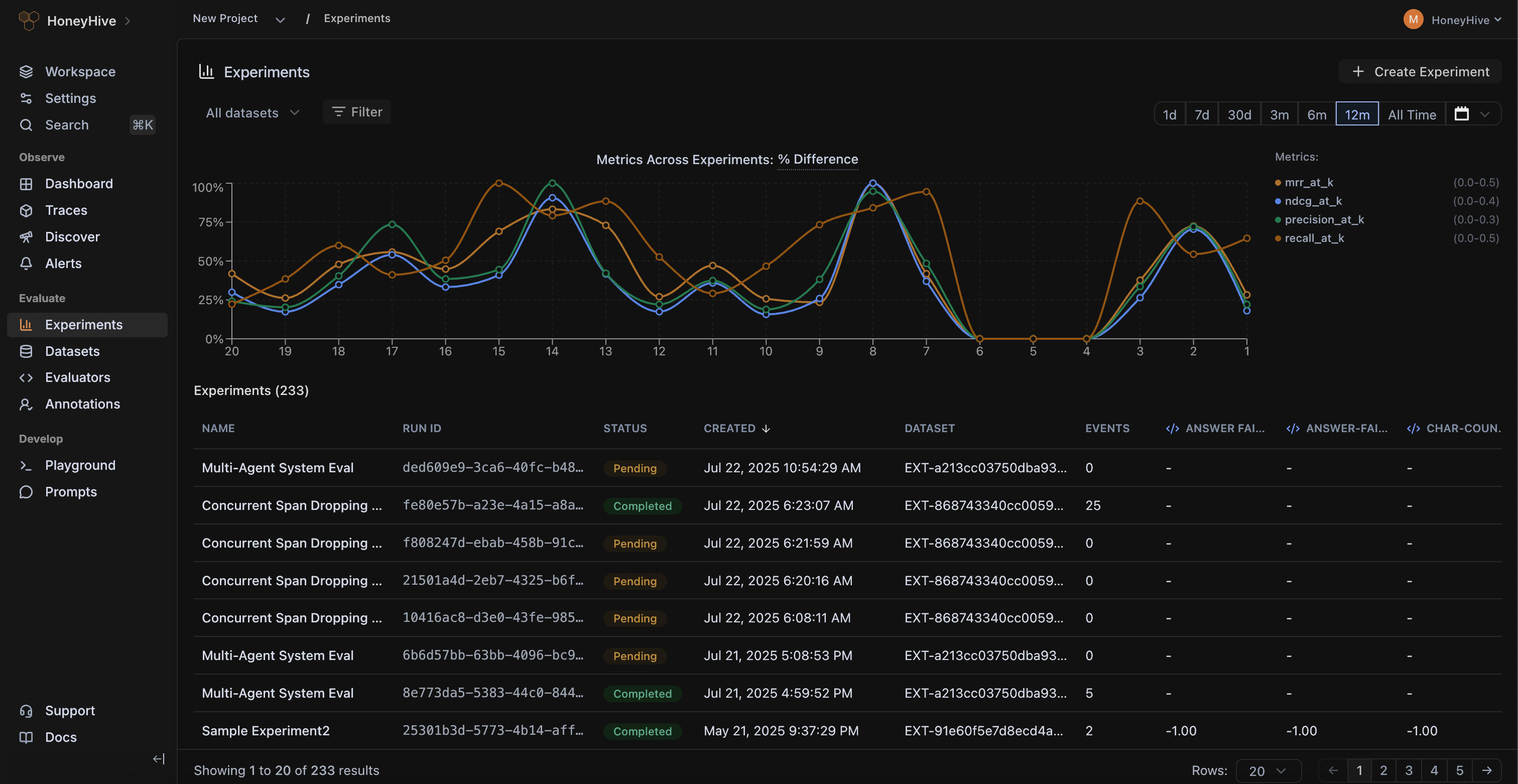

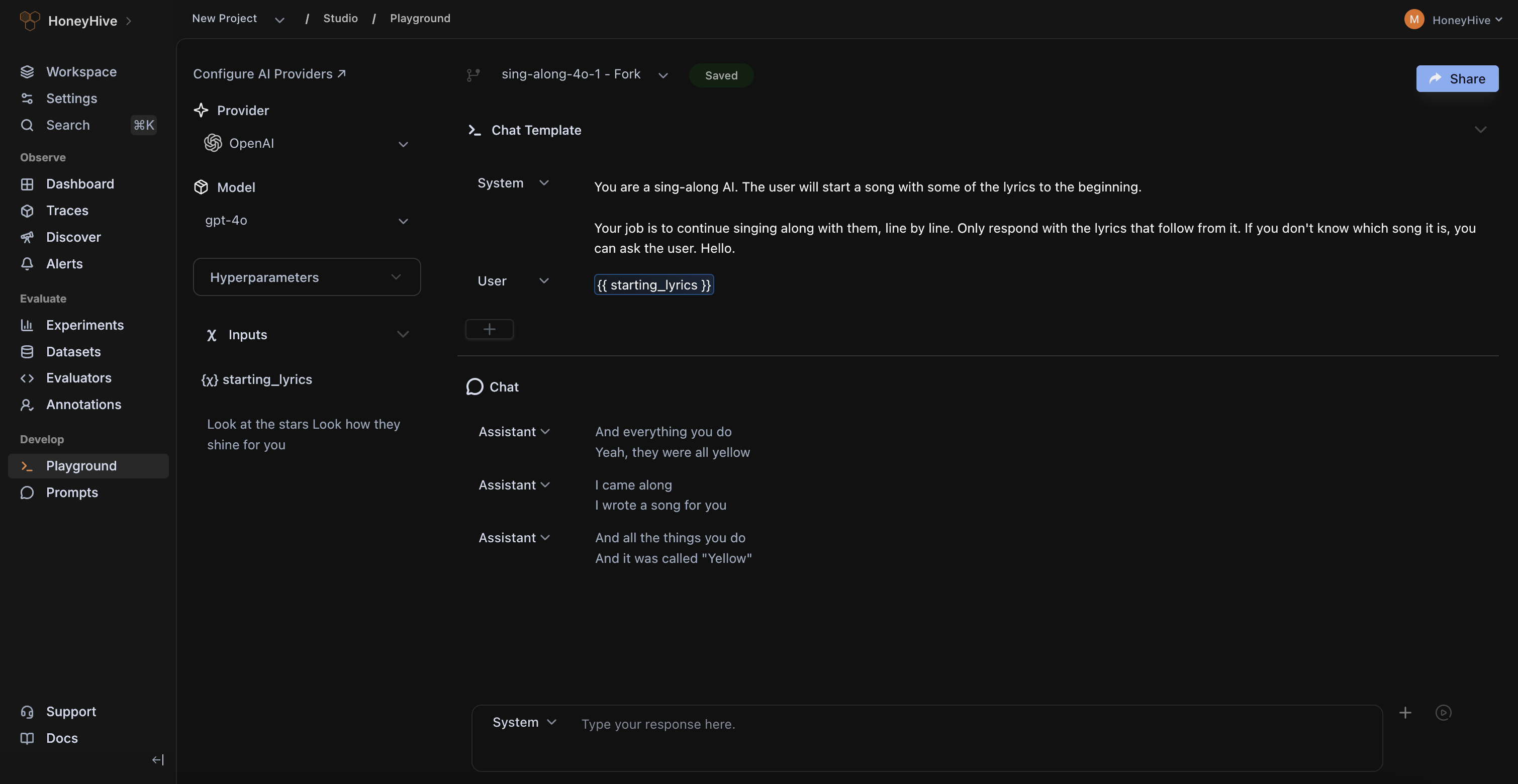

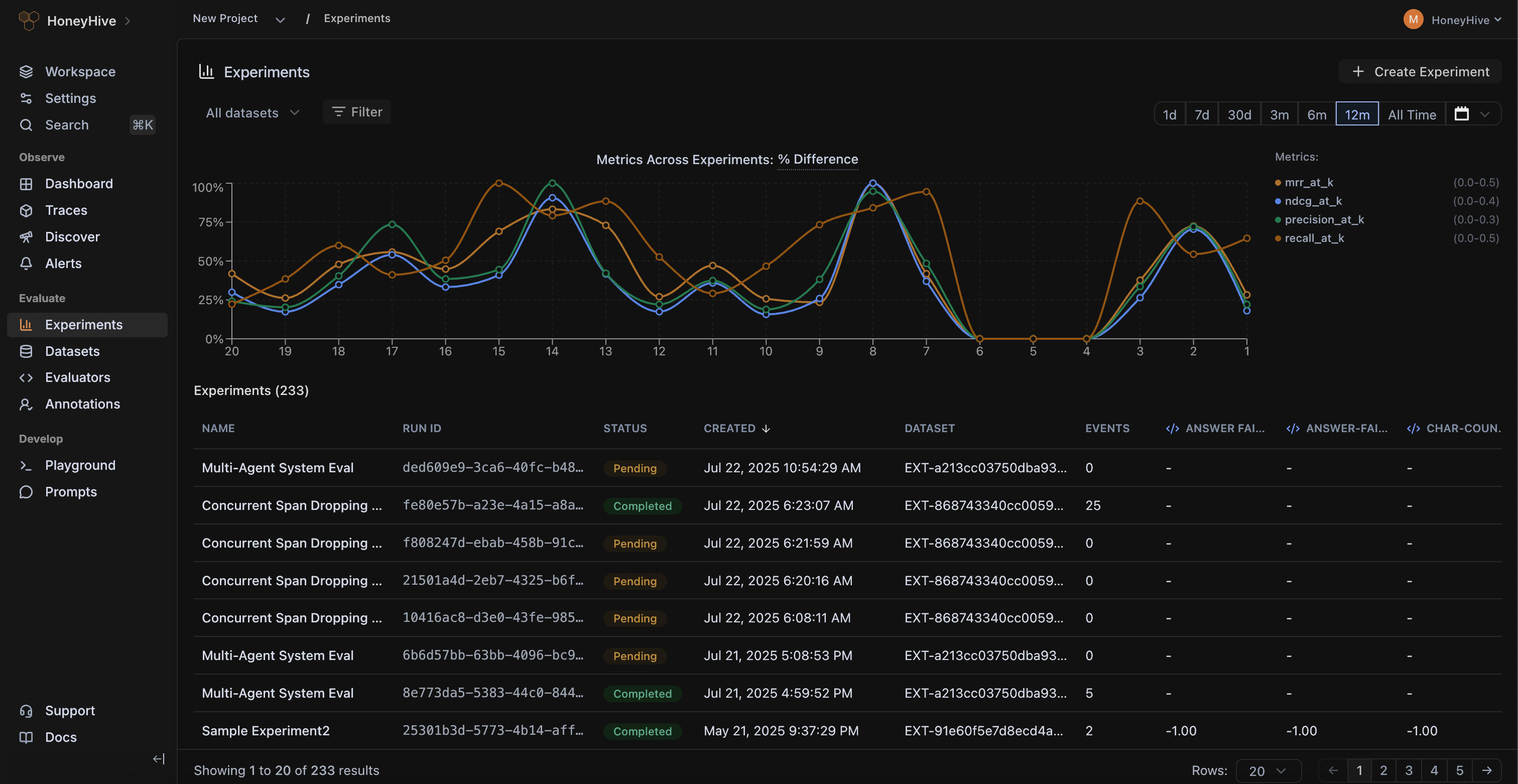

Turn production failures into test suites, compare new changes with baseline, and catch regressions before every release.
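
As a rough illustration of the baseline comparison described above, the following is a minimal sketch in plain Python. It does not use the HoneyHive SDK or CLI; the `EvalCase` shape, the `find_regressions` and `release_gate` helpers, the case names, the scores, and the `tolerance` threshold are all hypothetical and chosen only to show the idea of gating a release on per-case regressions.

```python
from dataclasses import dataclass


@dataclass
class EvalCase:
    """One test case derived from a logged production failure (hypothetical shape)."""
    case_id: str
    baseline_score: float   # evaluator score of the current release on this case
    candidate_score: float  # evaluator score of the proposed change on this case


def find_regressions(cases, tolerance=0.05):
    """Return IDs of cases where the candidate is worse than baseline by more than `tolerance`."""
    return [
        c.case_id
        for c in cases
        if c.baseline_score - c.candidate_score > tolerance
    ]


def release_gate(cases, tolerance=0.05):
    """Return (passed, regressions); the gate passes only if no case regressed."""
    regressions = find_regressions(cases, tolerance)
    return len(regressions) == 0, regressions


# Illustrative data only: three cases, one of which clearly regresses.
cases = [
    EvalCase("refund-policy-edge-case", 0.90, 0.92),
    EvalCase("multi-step-tool-call", 0.80, 0.60),
    EvalCase("ambiguous-user-intent", 0.70, 0.68),
]

passed, regressions = release_gate(cases)
print("gate passed:", passed)
print("regressed cases:", regressions)
```

A per-case comparison is used here rather than a single average score, since an average can hide a sharp drop on one failure mode behind small gains elsewhere.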

Bring subject matter experts into the loop to review edge cases, define quality, and align your evals with real-world business context.

OpenTelemetry-native and framework-agnostic. Get end-to-end visibility across every model, framework, and agent deployed within your company.

HoneyHive powers observability and evaluation across mission-critical AI systems at CBA, enabling safe and responsible deployment of AI agents serving 17M+ consumers.

Agent traces carry rich, highly sensitive I/O that traditional observability can’t handle. HoneyHive is designed specifically for AI traces and keeps your data isolated — logically by default, physically when you need to.

Ready-made skills, a full-featured CLI, and a docs MCP server mean your coding agents can set up tracing, write evals, and drive improvements for you.