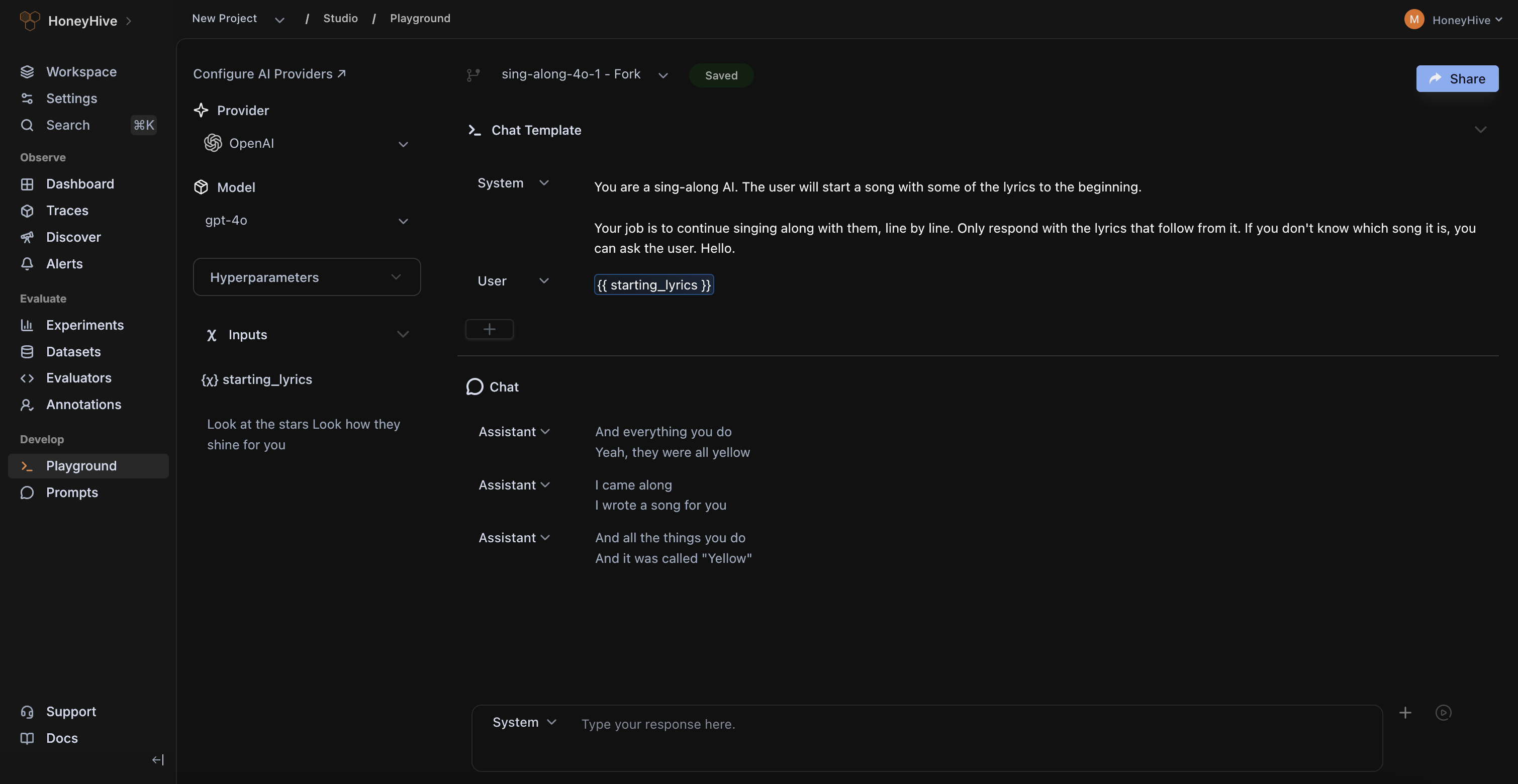

HoneyHive Studio enables your entire team to manage, version, and edit all their prompt templates, model variants, and OpenAI functions within a shared, collaborative workspace.

Our Playground is model-agnostic by design and integrates natively with closed-source model providers and all major GPU clouds.

HoneyHive makes it easy for your entire team to iterate on new prompts, comment on interesting findings, and share best practices.

HoneyHive automatically saves prompt versions as you iterate and run new prompts in the Playground.

Our optional proxy endpoint makes it easy to manage deployments and swiftly roll back changes without touching code.

Use your private data or any public data source in the Playground, with built-in integrations for vector databases and tools like SerpAPI.

Invite domain experts like PMs, CSMs, and financial analysts to contribute to prompt engineering.


Never lose a good prompt again, with automatic version control and history.

Use your own private data in the Playground by integrating external tools like Pinecone.

Access 100+ closed and open-source models, or integrate your own.

HoneyHive powers observability and evaluation across mission-critical AI systems at CBA, enabling safe and responsible deployment of AI agents serving 17M+ consumers.
